Howdy, folks. I’m Lee, and I do all of the server admin stuff for Space City Weather. I don’t post much (the last time was back in 2020), but the site has just gone through a pretty big architecture change, and I thought it was time for an update. If you’re at all interested in the hardware and software that makes Space City Weather work, then this post is for you!

If that sounds lame and nerdy and you’d rather hear more about this June’s debilitating heat wave, then fear not: Eric and Matt will be back tomorrow morning to tell you all about how much it sucks outside right now. (Spoiler alert: it sucks a whole lot.)

The old setup: physical hosting and complex software

For the past few years, Space City Weather has been running on a physical dedicated server at Liquid Web’s Michigan datacenter. We’ve used a web stack made up of three major components: HAProxy for SSL/TLS termination, Varnish for local cache, and Nginx (with php-fpm) for serving up WordPress, which is the actual application that generates the site’s pages for you to read. (If you’d like a more detailed explanation of what these applications do and how they all fit together, this post from a couple of years ago has you covered.) Then, in between you guys and the server sits a service called Cloudflare, which soaks up most of the load from visitors by serving up cached pages to folks.

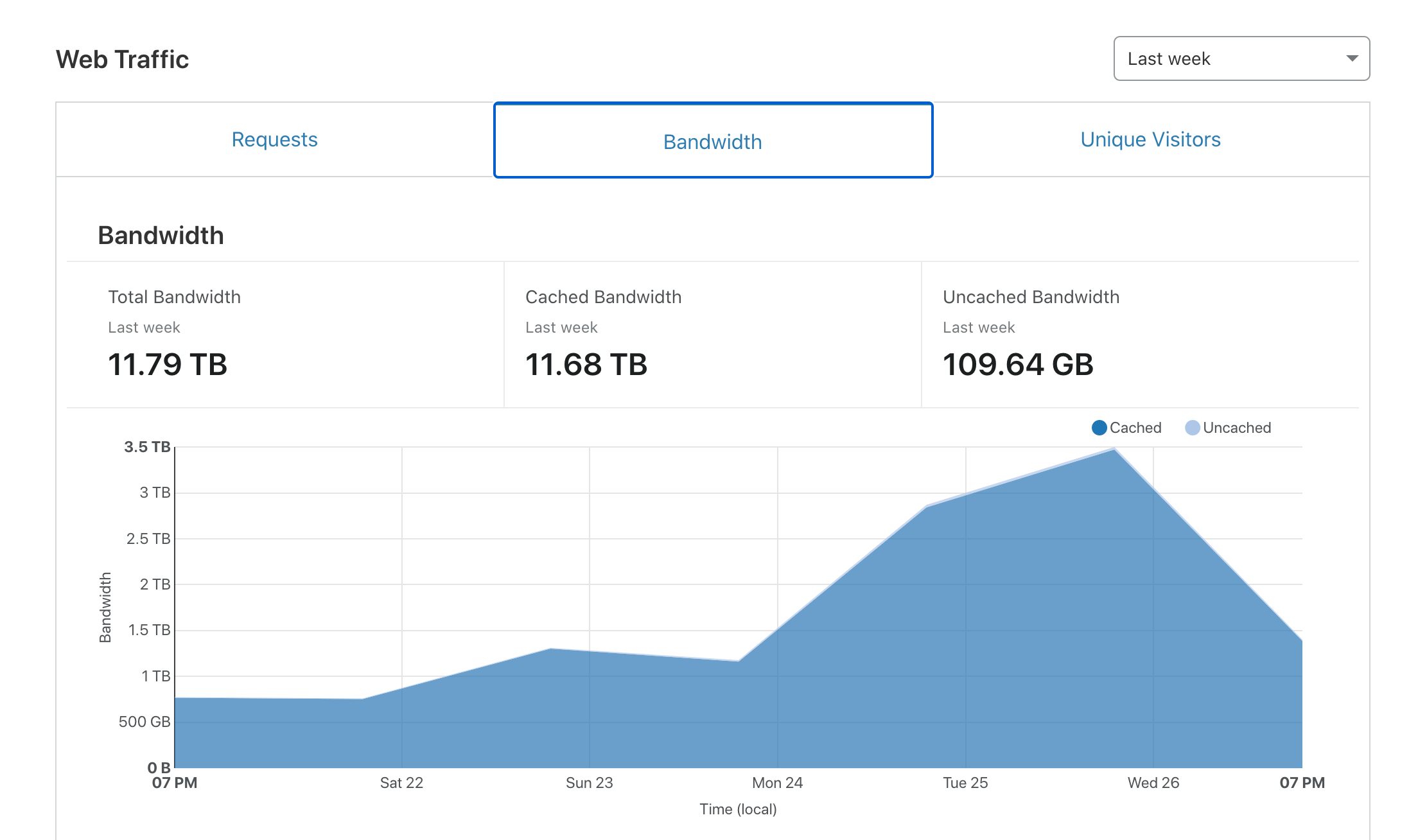

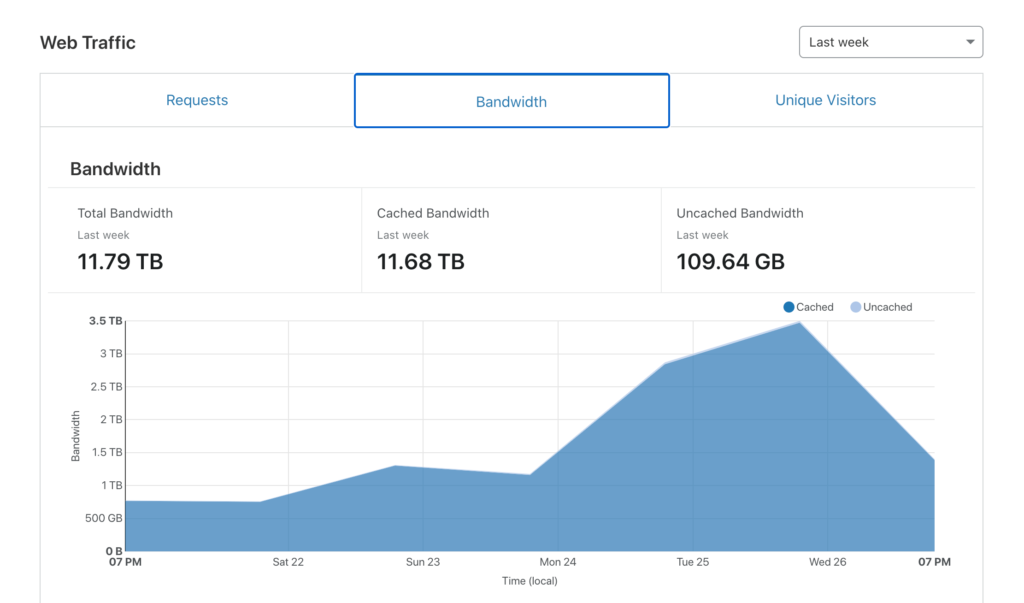

It was a resilient and bulletproof setup, and it got us through two massive weather events (Hurricane Harvey in 2017 and Hurricane Laura in 2020) without a single hiccup. But here’s the thing: Cloudflare is particularly excellent at its primary job, which is absorbing network load. In fact, it’s so good at it that during our major weather events, Cloudflare did practically all the heavy lifting.

With Cloudflare eating practically all of the load, our fancy server spent most of its time idling. On one hand, this was good, because it meant we had a tremendous amount of reserve capacity, and reserve capacity makes the cautious sysadmin inside me very happy. On the other hand, excess reserve capacity without a plan to use it is just a fancy way of spending hosting dollars without realizing any return, and that’s not great.

Plus, the hard truth is that the SCW web stack, bulletproof though it may be, was probably more complex than it needed to be for our specific use case. Having both an on-box cache (Varnish) and a CDN-type cache (Cloudflare) sometimes made troubleshooting problems a huge pain in the butt, since multiple cache layers means multiple things you need to make sure are properly bypassed before you start digging in on your issue.
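For the technically minded, here is a minimal sketch of what "checking each cache layer" can look like in practice. It is an illustration and not the actual SCW tooling: the function name is hypothetical, and it assumes Cloudflare's `CF-Cache-Status` response header and Varnish's default `X-Varnish` header are both still present on the response.

```python
def cache_layers_that_answered(headers):
    """Return the cache layers that appear to have served a response from cache.

    `headers` is a dict of HTTP response headers. Names are compared
    case-insensitively, as HTTP requires.
    """
    h = {name.lower(): value for name, value in headers.items()}
    layers = []

    # Cloudflare reports its edge cache result in CF-Cache-Status.
    if h.get("cf-cache-status", "").upper() == "HIT":
        layers.append("cloudflare")

    # Varnish's default X-Varnish header holds one transaction ID on a miss
    # and two on a hit (the second is the request that filled the cache).
    if len(h.get("x-varnish", "").split()) == 2:
        layers.append("varnish")

    return layers
```

An empty list suggests the page came straight from the application, which is what you want to confirm before blaming WordPress for a stale page. One caveat: when Cloudflare itself reports a hit, any origin headers on the response are the ones stored when Cloudflare cached the page, so they describe that earlier request.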

Between the cost and the complexity, it was time for a change. So we changed!

Jumping into the clouds, finally

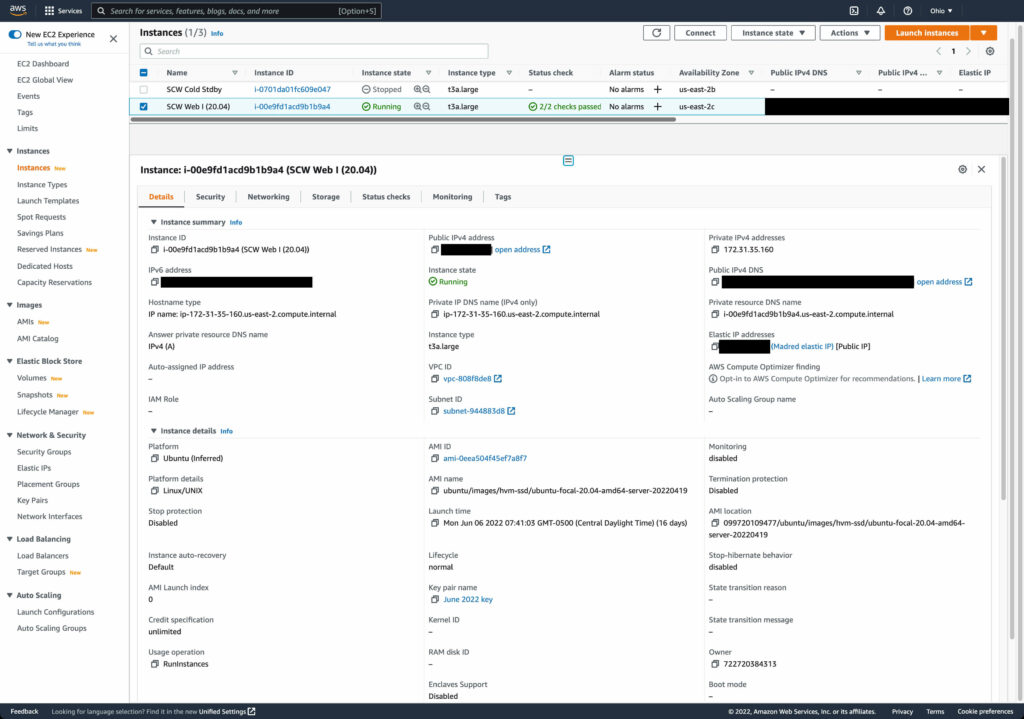

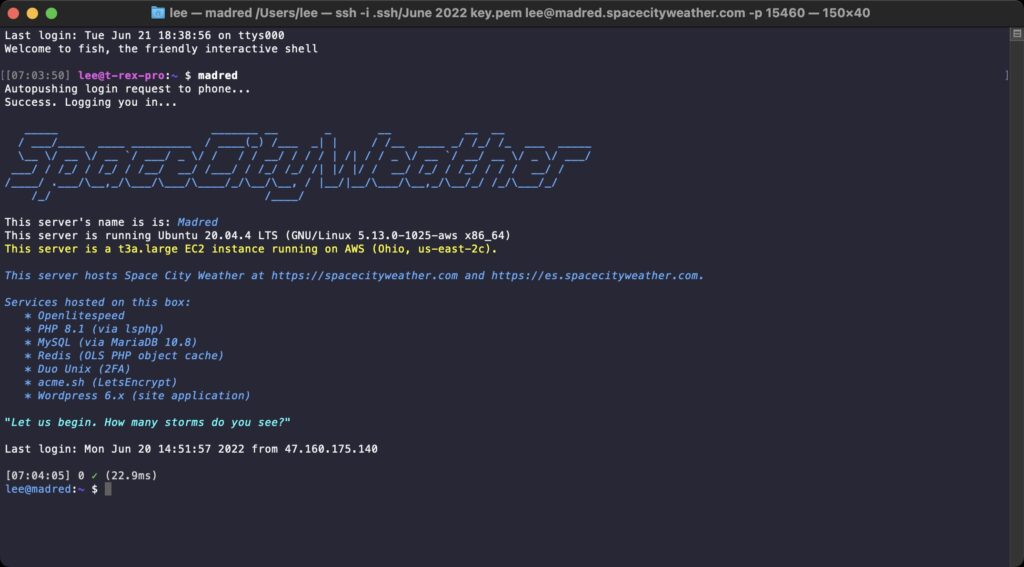

As of Monday, June 6, SCW has been hosted not on a physical box in Michigan, but on AWS. More specifically, we’ve migrated to an EC2 instance, which gives us our own cloud-based virtual server. (Don’t worry if “cloud-based virtual server” sounds like geek buzzword mumbo-jumbo. You don’t have to know or care about any of this in order to get the daily weather forecasts!)

Making the change from physical to cloud-based virtual buys us a tremendous amount of flexibility, since if we ever need to, I can add more resources to the server by changing the settings rather than by having to call up Liquid Web and arrange for an outage window in which to do a hardware upgrade. More importantly, the virtual setup is considerably cheaper, cutting our yearly hosting bill by something like 80 percent. (For the curious and/or the technically minded, we’re taking advantage of EC2 reserved instance pricing to pre-buy EC2 time at a substantial discount.)

On top of controlling costs, going virtual and cloud-based gives us a much better set of options for how we can do server backups (out with rsnapshot, in with actual-for-real block-based EBS snapshots!). This should make it massively easier for SCW to get back online from backups if anything ever does go wrong.
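As a rough illustration of what a block-based EBS snapshot involves, here is a minimal sketch. It assumes the boto3 library's EC2 client, which the post does not name as the tool in use; the function name, the `scw-backup` label, and the volume ID in the usage note are hypothetical.

```python
from datetime import datetime, timezone


def snapshot_volume(ec2_client, volume_id, label="scw-backup"):
    """Ask EC2 to snapshot one EBS volume and return the new snapshot's ID.

    `ec2_client` is expected to behave like boto3.client("ec2").
    """
    # Timestamp the description so snapshots are easy to tell apart later.
    stamp = datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%MZ")
    response = ec2_client.create_snapshot(
        VolumeId=volume_id,
        Description=f"{label} {stamp}",
    )
    return response["SnapshotId"]
```

With real credentials this would be called as `snapshot_volume(boto3.client("ec2"), "vol-0123456789abcdef0")`. The call returns as soon as AWS accepts the request; the snapshot itself finishes in the background.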

The one potential “gotcha” with this minimalist virtual approach is that I’m not taking advantage of the tools AWS provides to do true high availability hosting, primarily because those tools are expensive and would obviate most or all of the savings we’re currently realizing over physical hosting. The only conceivable outage situation we’d need to recover from would be an AWS availability zone outage, which is rare but definitely happens from time to time. To guard against this possibility, I’ve got a second AWS instance in a second availability zone on cold standby. If there’s a problem with the SCW server, I can spin up the cold standby box within minutes and we’ll be good to go. (This is an oversimplified explanation, but if I sit here and describe our disaster recovery plan in detail, it’ll put everyone to sleep!)
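For the curious, waking a stopped standby instance can be sketched in a few lines. This is an assumption-laden illustration and not the actual SCW recovery procedure: it assumes the boto3 EC2 client, and the function name and instance ID are hypothetical.

```python
def start_cold_standby(ec2_client, standby_instance_id):
    """Start a stopped EC2 instance and block until AWS reports it running.

    `ec2_client` is expected to behave like boto3.client("ec2") for the
    region that holds the standby instance.
    """
    ec2_client.start_instances(InstanceIds=[standby_instance_id])

    # The built-in "instance_running" waiter polls until the state changes.
    waiter = ec2_client.get_waiter("instance_running")
    waiter.wait(InstanceIds=[standby_instance_id])
    return standby_instance_id
```

Starting the box is only the first step. Pointing visitors at it (for example, by changing the origin address that Cloudflare uses) is a separate step that this sketch leaves out.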

Simplifying the software program stack

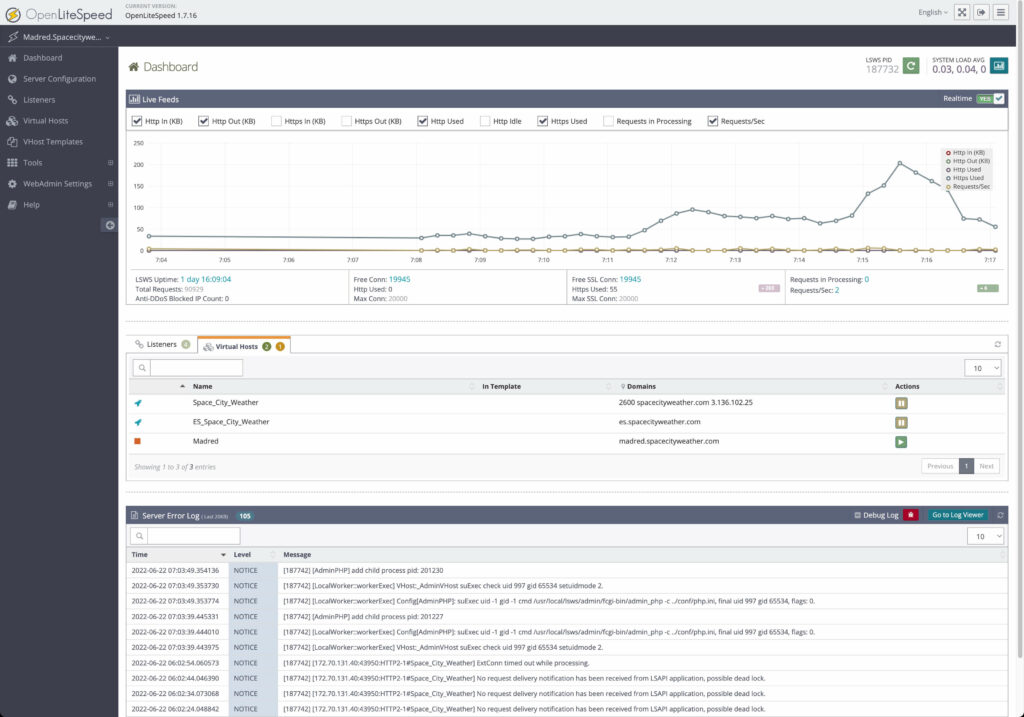

Along with the hosting switch, we’ve re-architected our web server’s software stack with an eye toward simplifying things while keeping the site responsive and quick. To that end, we’ve jettisoned our old trio of HAProxy, Varnish, and Nginx and settled instead on an all-in-one web server application with built-in caching, called OpenLiteSpeed.

OpenLiteSpeed (“OLS” to its friends) is the libre version of LiteSpeed Web Server, an application which has been getting more and more attention as a super-quick and super-friendly alternative to traditional web servers like Apache and Nginx. It’s supposed to be quicker than Nginx or Varnish in many performance regimes, and it seemed like a great single-app candidate to replace our complex multi-app stack. After testing it on my personal site, SCW took the plunge.

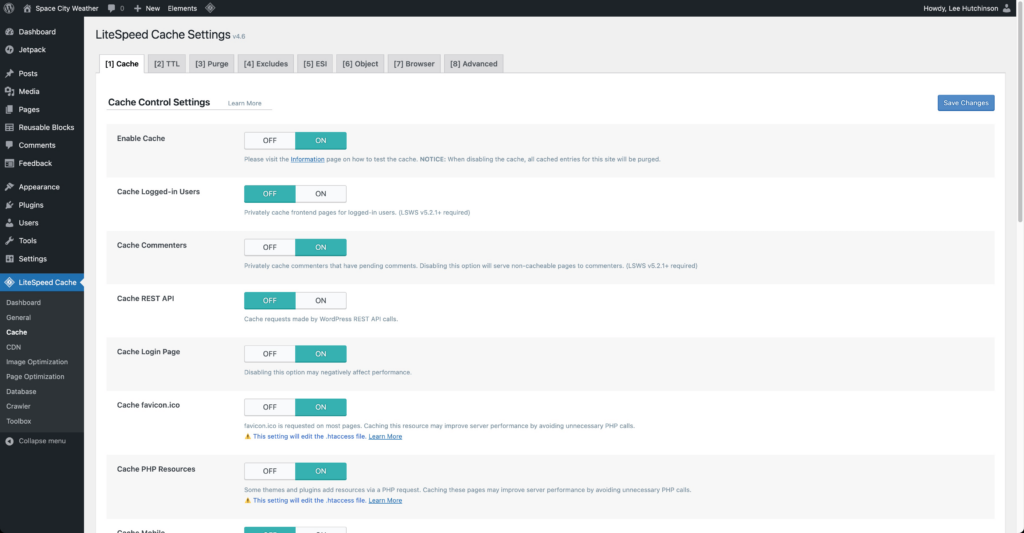

There were a few configuration growing pains (eagle-eyed visitors might have noticed a couple of small server hiccups over the past week or two as I’ve been tweaking settings), but so far the change is proving to be a hugely positive one. OLS has excellent integration with WordPress via a powerful plugin that exposes a ton of advanced configuration options, which in turn lets us tune the site so that it works exactly the way we want it to work.

Looking toward the future

Eric and Matt and Maria put in a lot of time and effort to make sure the forecasting they bring you is as reliable and hype-free as they can make it. In that same spirit, the SCW backend crew (which so far is me and app designer Hussain Abbasi, with Dwight Silverman acting as project manager) try to make smart, responsible tech decisions so that Eric’s and Matt’s and Maria’s words reach you as quickly and reliably as possible, come rain or shine or heatwave or hurricane.

I’ve been living here in Houston for every one of my 43 years on this Earth, and I’ve got the same visceral first-hand knowledge many of you have about what it’s like to stare down a tropical cyclone in the Gulf. When a weather event happens, much of Houston turns to Space City Weather for answers, and that level of responsibility is both frightening and humbling. It’s something we all take very seriously, and so I’m hopeful that the changes we’ve made to the hosting setup will serve visitors well as the summer rolls on into the danger months of August and September.

So cheers, everyone! I wish us all a 2022 filled with nothing but calm winds, pleasant seas, and a total lack of hurricanes. And if Mother Nature does decide to fling one at us, well, Eric and Matt and Maria will talk us all through what to do. If I’ve done my job right, no one will have to think about the servers and applications humming along behind the scenes keeping the site operational, and that’s exactly how I like things to be 🙂